LPT: If you’re using a computer for work, One is None

About a year ago I had a conversation with a professional friend about our work arrangements at the time, and what we’d do in terms of bench setup if we each were to do full-time independent consulting and contracting. Almost immediately, we both agreed on having two (2) computers.

The rationale is pretty obvious: loss of use of computing tools for design and development is a major risk to revenue when you’re the invoicing party. Being dead in the water when your only corporate-issued rig gives up isn’t fun either.

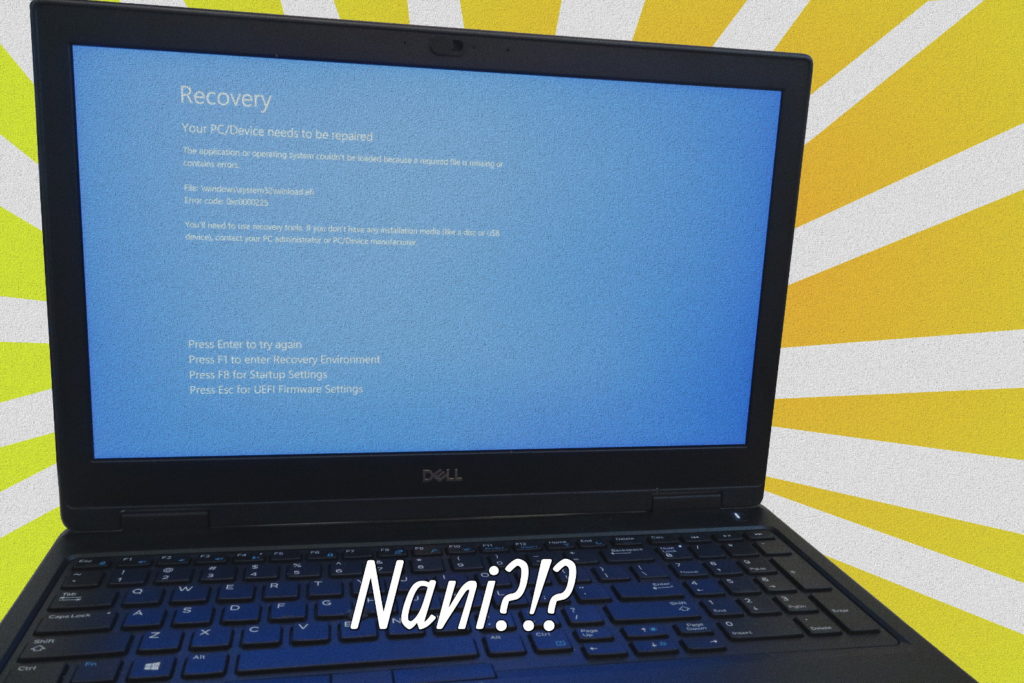

I was reminded of that think-storm session earlier this week, right in the middle of a rather intense sprint.

Luckily, this halting-on-boot laptop wasn’t the only computer I have for development. Otherwise, it would have been very inconvenient for project support, and potentially a major hassle had major servicing been required during the COVID-19 shelter-in-place.

I experienced no loss of productivity because I do most development on another computer that is my daily driver. On the table next to it is my secondary computer, which, while not identically configured, can stand in with the same tool chains should the need arise.

Of course, a sound data backup and recovery plan is always necessary, and most people (hopefully) have that in mind, especially as some computer models tightly integrate storage modules, rendering recovery difficult when the system fails to operate. Redundancy in workstations and other important tools may not often be at the forefront of thought, but it is an important consideration. Even if you’re able to pick up a new computer from the store within the hour, it may take a day or so to load up your software tools and get back to being productive.

It took me a few months to determine the most appropriate redundant systems strategy. At first, I had thought of keeping two mostly identical computers, but quickly realized that one of them would most likely collect dust. Two identical setups are also potentially vulnerable to same-time failure if, for example, the OS vendor pushes out a faulty automatic update.

For now, I have settled on maintaining complementary platforms that can host cross-platform tools natively and/or virtualize operating environments that can be replicated. This way, all of the computers get used on a regular basis and have enough overlap should one fail at an inopportune time.
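
One way to confirm that overlap before it is needed is to check that the stand-in machine can actually find the tools a project depends on. Here is a minimal sketch in Python, assuming a tool list that is purely hypothetical (`git`, `make`, `python3`); it only looks for executables on the `PATH` and says nothing about versions or licenses.

```python
import shutil

# Hypothetical list of command-line tools a project depends on; replace with your own.
REQUIRED_TOOLS = ["git", "make", "python3"]


def missing_tools(tools, which=shutil.which):
    """Return the entries of `tools` that cannot be found on this machine's PATH."""
    # shutil.which returns None when no matching executable is found.
    return [tool for tool in tools if which(tool) is None]


if __name__ == "__main__":
    missing = missing_tools(REQUIRED_TOOLS)
    if missing:
        print("Not ready as a stand-in; missing:", ", ".join(missing))
    else:
        print("All required tools found.")
```

Because it is plain Python with no third-party dependencies, the same script can be run on each platform to compare results.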
